Finding Data Quality

Have you ever experienced that sinking feeling, where you sense that if you don’t find data quality, then data quality will find you?

In the spring of 2003, Pixar Animation Studios produced one of my all-time favorite Walt Disney Pictures—Finding Nemo.

This blog post is an homage not only to the film, but also to the critically important role in which data quality is cast within all of your enterprise information initiatives, including business intelligence, master data management, and data governance.

I hope that you enjoy reading this blog post, but most important, I hope you always remember: “Data are friends, not food.”

Data Silos

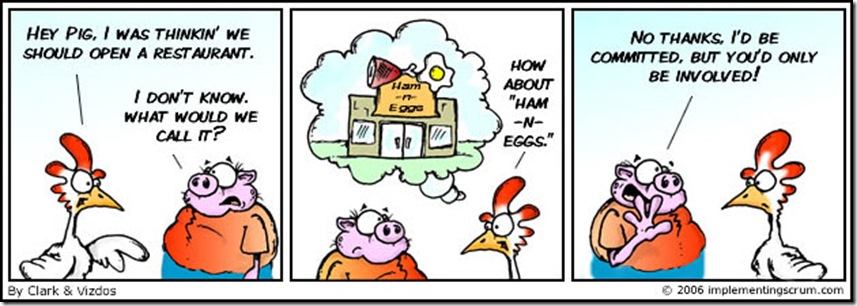

“Mine! Mine! Mine! Mine! Mine!”

That’s the Data Silo Mantra, and it is also the bane of successful enterprise information management. Many organizations persist in their reliance on vertical data silos, where each and every business unit acts as the custodian of its own private data, thereby maintaining its own version of the truth.

Impressive business growth can cause an organization to become a victim of its own success. This success can inflict significant collateral damage, most notably on the organization’s burgeoning information architecture.

Earlier in its history, an organization usually has fewer systems and easily manageable volumes of data. Managing data quality and effectively delivering the critical information required to make informed business decisions every day is then a relatively easy task, and technology can serve business needs well, especially when the business and its needs are small.

However, as the organization grows, it trades effectiveness for efficiency, prioritizing short-term tactics over long-term strategy, and by seeing power in the hoarding of data, not in the sharing of information, the organization chooses business unit autonomy over enterprise-wide collaboration—and without this collaboration, successful enterprise information management is impossible.

A data silo often merely represents a microcosm of an enterprise-wide problem—and this truth is neither convenient nor kind.

Data Profiling

“I see a light—I’m feeling good about my data . . .

Good feeling’s gone—AHH!”

Although it’s not exactly a riddle wrapped in a mystery inside an enigma, understanding your data is essential to using it effectively and improving its quality—to achieve these goals, there is simply no substitute for data analysis.

Data profiling can provide a reality check for the perceptions and assumptions you may have about the quality of your data. A data profiling tool can help you by automating some of the grunt work needed to begin your analysis.

However, it is important to remember that the analysis itself cannot be automated. You need to translate your analysis into the meaningful reports and questions that will facilitate more effective communication and help establish tangible business context.

Ultimately, I believe the goal of data profiling is not to find answers, but instead, to discover the right questions.

Discovering the right questions requires talking with data’s best friends: its stewards, analysts, and subject matter experts. These discussions are a critical prerequisite for determining data usage, standards, and the business-relevant metrics for measuring and improving data quality. Always remember that well-performed data profiling is a highly interactive and very iterative process.
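To give a feel for the grunt work a data profiling tool automates, here is a minimal sketch in Python. It is not taken from any particular tool: the `profile_column` function, the sample records, and the rule that blank strings count as missing are all assumptions made up for illustration.

```python
from collections import Counter


def profile_column(values):
    """Summarize one column: row count, missing values, distinct values, top values."""
    # Assumption: None and blank strings count as missing; adjust for your data.
    present = [v for v in values if v is not None and str(v).strip() != ""]
    counts = Counter(present)
    return {
        "rows": len(values),
        "missing": len(values) - len(present),
        "distinct": len(counts),
        "most_common": counts.most_common(3),
    }


# Hypothetical sample data, invented purely for illustration.
records = [
    {"customer_id": "C001", "state": "MA"},
    {"customer_id": "C002", "state": "ma"},
    {"customer_id": "C003", "state": ""},
    {"customer_id": "C003", "state": "NH"},
]

# Profile every column that appears in the first record.
profile = {
    column: profile_column([row.get(column) for row in records])
    for column in records[0]
}

for column, summary in profile.items():
    print(column, summary)
```

The summary does not answer anything by itself, but it surfaces questions to take to the data’s best friends: is “ma” the same state as “MA”, should a blank state be allowed, and why does customer C003 appear twice?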

Defect Prevention

“You, Data-Dude, takin’ on the defects.

You’ve got serious data quality issues, dude.

Awesome.”

Even though it is impossible to truly prevent every problem before it happens, proactive defect prevention is a highly recommended data quality best practice because the more control enforced where data originates, the better the overall quality will be for enterprise information.

Although defect prevention is most commonly associated with business and technical process improvements, after identifying the burning root cause of your data defects, you may predictably need to apply some of the principles of behavioral data quality.

In other words, understanding the complex human dynamics often underlying data defects is necessary for developing far more effective tactics and strategies for implementing successful and sustainable data quality improvements.

Data Cleansing

“Just keep cleansing. Just keep cleansing.

Just keep cleansing, cleansing, cleansing.

What do we do? We cleanse, cleanse.”

That’s not the Data Cleansing Theme Song—but it can sometimes feel like it. Especially whenever poor data quality negatively impacts decision-critical information, the organization may legitimately prioritize a reactive short-term response, where the only remediation will be fixing the immediate problems.

Balancing the demands of this data triage mentality with the best practice of implementing defect prevention wherever possible will often create a very challenging situation for you to contend with on an almost daily basis.

Therefore, although comprehensive data remediation will require combining reactive and proactive approaches to data quality, you need to be willing and able to put data cleansing tools to good use whenever necessary.

Communication

“It’s like he’s trying to speak to me, I know it.

Look, you’re really cute, but I can’t understand what you’re saying.

Say that data quality thing again.”

I hear this kind of thing all the time (well, not the “you’re really cute” part).

Effective communication improves everyone’s understanding of data quality, establishes a tangible business context, and helps prioritize critical data issues.

Keep in mind that communication is mostly about listening. Also, be prepared to face “data denial” when data quality problems are discussed. Most often, this is a natural self-defense mechanism for the people responsible for business processes, technology, and data, because nobody likes to feel blamed for causing or failing to fix data quality problems.

The key to effective communication is clarity. You should always make sure that all data quality concepts are clearly defined and in a language that everyone can understand. I am not just talking about translating the techno-mumbojumbo, because even business-speak can sound more like business-babbling—and not just to the technical folks.

Additionally, don’t be afraid to ask questions or admit when you don’t know the answers. Many costly mistakes can be made when people assume that others know (or pretend to know themselves) what key concepts and other terminology actually mean.

Never underestimate the potential negative impacts that the point of view paradox can have on communication. For example, the perspectives of the business and technical stakeholders can often appear to be diametrically opposed.

Practicing effective communication requires shutting our mouths, opening our ears, and empathically listening to each other, instead of continuing to practice ineffective communication, where we merely take turns throwing word-darts at each other.

Collaboration

“Oh and one more thing:

When facing the daunting challenge of collaboration,

Work through it together, don’t avoid it.

Come on, trust each other on this one.

Yes—trust—it’s what successful teams do.”

Most organizations suffer from a lack of collaboration, and as noted earlier, without true enterprise-wide collaboration, true success is impossible.

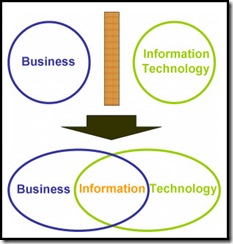

Beyond the data silo problem, the most common challenge for collaboration is the divide perceived to exist between the Business and IT, where the Business usually owns the data and understands its meaning and use in the day-to-day operation of the enterprise, and IT usually owns the hardware and software infrastructure of the enterprise’s technical architecture.

However, neither the Business nor IT alone has all of the necessary knowledge and resources required to truly be successful. Data quality requires that the Business and IT forge an ongoing and iterative collaboration.

You must rally the team that will work together to improve the quality of your data. A cross-disciplinary team is necessary because data quality is neither solely a business issue nor solely a technical issue. It is both, which truly makes it an enterprise issue.

Executive sponsors, business and technical stakeholders, business analysts, data stewards, technology experts, and yes, even consultants and contractors—only when all of you are truly working together as a collaborative team, can the enterprise truly achieve great things, both tactically and strategically.

Successful enterprise information management is spelled E—A—C.

Of course, that stands for Enterprises—Always—Collaborate. The EAC can be one seriously challenging place, dude.

You don’t know if you know what they know, or if they know what you know, but when you know, then they know, you know?

It’s like first you are all like “Whoa!” and they are all like “Whoaaa!” then you are like “Sweet!” and then they are like “Totally!”

This critical need for collaboration might seem rather obvious. However, as all of the great philosophers have taught us, sometimes the hardest thing to learn is the least complicated.

Okay. Squirt will now give you a rundown of the proper collaboration technique:

“Good afternoon. We’re gonna have a great collaboration today.

Okay, first crank a hard cutback as you hit the wall.

There’s a screaming bottom curve, so watch out.

Remember: rip it, roll it, and punch it.”

Finding Data Quality

As more and more organizations realize the critical importance of viewing data as a strategic corporate asset, data quality is becoming an increasingly prevalent topic of discussion.

However, and somewhat understandably, data quality is sometimes viewed as a small fish—albeit with a “lucky fin”—in a much larger pond.

In other words, data quality is often discussed only in its relation to enterprise information initiatives such as data integration, master data management, data warehousing, business intelligence, and data governance.

There is nothing wrong with this perspective, and as a data quality expert, I admit to my general tendency to see data quality in everything. However, regardless of the perspective from which you begin your journey, I believe that eventually you will be Finding Data Quality wherever you look as well.

I grew up and lived most of my life in the suburbs of Boston, Massachusetts. But just prior to relocating to the Midwest for work seven years ago, I lived in Derry, New Hampshire, just down the road from the
