Recently, a Twitter-related tête à tête à tête involving David Carr of The New York Times, Nick Bilton of The New York Times, and George Packer of The New Yorker temporarily made both the Blogosphere all abuzz and the Twitterverse all atwitter.

This was simply another entry in the deeply polarizing debate between those for (Carr and Bilton) and against (Packer) Twitter.

A new decade of debate begins

On January 1, 2010, David Carr published his thoughts in the article Why Twitter Will Endure:

“By carefully curating the people you follow, Twitter becomes an always-on data stream from really bright people in their respective fields, whose tweets are often full of links to incredibly vital, timely information.”

. . .

“Nearly a year in, I’ve come to understand that the real value of the service is listening to a wired collective voice.”

. . .

“At first, Twitter can be overwhelming, but think of it as a river of data rushing past that I dip a cup into every once in a while. Much of what I need to know is in that cup . . . I almost always learn about it first on Twitter.”

. . .

“All those riches do not come at zero cost: If you think e-mail and surfing can make time disappear, wait until you get ahold of Twitter, or more likely, it gets ahold of you. There is always something more interesting on Twitter than whatever you happen to be working on.”

Carr goes on to quote Clay Shirky, author of the book Here Comes Everybody:

“It will be hard to wait out Twitter because it is lightweight, endlessly useful and gets better as more people use it. Brands are using it, institutions are using it, and it is becoming a place where a lot of important conversations are being held.”

The most frightening picture of the future

On January 29, 2010, in his blog post Stop the World, George Packer declared that “the most frightening picture of the future that I’ve read thus far in the new decade has nothing to do with terrorism or banking or the world’s water reserves.”

What was the most frightening picture of the future that Packer had read less than a month into the new decade?

The aforementioned article by David Carr—no, I am not kidding.

“Every time I hear about Twitter,” wrote Packer, “I want to yell Stop! The notion of sending and getting brief updates to and from dozens or thousands of people every few minutes is an image from information hell. I’m told that Twitter is a river into which I can dip my cup whenever I want. But that supposes we’re all kneeling on the banks. In fact, if you’re at all like me, you’re trying to keep your footing out in midstream, with the water level always dangerously close to your nostrils. Twitter sounds less like sipping than drowning.”

Packer, who admits that he has, in fact, never even used Twitter, continued with a crack addiction analogy:

“Who doesn’t want to be taken out of the boredom or sameness or pain of the present at any given moment? That’s what drugs are for, and that’s why people become addicted to them.

Carr himself was once a crack addict (he wrote about it in The Night of the Gun). Twitter is crack for media addicts.

It scares me, not because I’m morally superior to it, but because I don’t think I could handle it. I’m afraid I’d end up letting my son go hungry.”

“Call me a digital crack dealer”

On February 3, 2010, in his blog post, The Twitter Train Has Left the Station, Nick Bilton responded:

“Call me a digital crack dealer, but here’s why Twitter is a vital part of the information economy—and why Mr. Packer and other doubters ought to at least give it a Tweet:

Hundreds of thousands of people now rely on Twitter every day for their business. Food trucks and restaurants around the world tell patrons about daily food specials. Corporations use the service to handle customer service issues. Starbucks, Dell, Ford, JetBlue and many more companies use Twitter to offer discounts and coupons to their customers. Public relations firms, ad agencies, schools, the State Department—even President Obama—use Twitter and other social networks to share information.”

. . .

“Most importantly, Twitter is transforming the nature of news, the industry from which Mr. Packer reaps his paycheck. The news media are going through their most robust transformation since the dawn of the printing press, in large part due to the Internet and services like Twitter. After this metamorphosis takes place, everyone will benefit from the information moving swiftly around the globe.”

Bilton concludes his post with a train analogy:

“Ironically, Mr. Packer notes how much he treasures his Amtrak rides in the quiet car of the train, with his laptop closed and cellphone turned off. As I’ve found in previous research, when trains were a new technology 150 years ago, some journalists and intellectuals worried about the destruction that the railroads would bring to society. One news article at the time warned that trains would ‘blight crops with their smoke, terrorize livestock … and people could asphyxiate’ if they traveled on them.

I wonder if, 150 years ago, Mr. Packer would be riding the train at all, or if he would have stayed home, afraid to engage in an evolving society and demanding that the trains be stopped.”

Our apparent appetite for our own destruction

On February 4, 2010, in his blog post Neither Luddite nor Biltonite, George Packer responded:

“It’s true that I hadn’t used Twitter (not consciously, anyway—my editors inform me that this blog has for some time had an automated Twitter feed). I haven’t used crack, either, but—as a Bilton reader pointed out—you don’t need to do the drug to understand the effects.”

. . .

“Just about everyone I know complains about the same thing when they’re being honest—including, maybe especially, people whose business is reading and writing. They mourn the loss of books and the loss of time for books. It’s no less true of me, which is why I’m trying to place a few limits on the flood of information that I allow into my head.”

. . .

“There’s no way for readers to be online, surfing, e-mailing, posting, tweeting, reading tweets, and soon enough doing the thing that will come after Twitter, without paying a high price in available time, attention span, reading comprehension, and experience of the immediately surrounding world. The Internet and the devices it’s spawned are systematically changing our intellectual activities with breathtaking speed, and more profoundly than over the past seven centuries combined. It shouldn’t be an act of heresy to ask about the trade-offs that come with this revolution.”

. . .

“The response to my post tells me that techno-worship is a triumphalist and intolerant cult that doesn’t like to be asked questions. If a Luddite is someone who fears and hates all technological change, a Biltonite is someone who celebrates all technological change: because we can, we must. I’d like to think that in 1860 I would have been an early train passenger, but I’d also like to think that in 1960 I’d have urged my wife to go off Thalidomide.”

. . .

“American newspapers and magazines will continue to die by the dozen. The economic basis for reporting (as opposed to information-sharing, posting, and Tweeting) will continue to erode. You have to be a truly hard-core techno-worshipper to call this robust. Any journalist who cheerleads uncritically for Twitter is essentially asking for his own destruction.”

. . .

“It’s true that Bilton will have news updates within seconds that reach me after minutes or hours or even days.

It’s a trade-off I can live with.”

Packer concludes his post by quoting the ending of G. B. Trudeau's book, My Shorts R Bunching. Thoughts?, which reads:

“The time you spend reading this tweet is gone, lost forever, carrying you closer to death. Am trying not to abuse the privilege.”

The Twitter Clockwork is NOT Orange

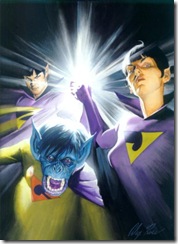

The primary propaganda used by the anti-Twitter lunatic fringe compares the microblogging and social networking service to that disturbing scene (pictured above) from the movie A Clockwork Orange. You are confined within a straitjacket, your head is strapped into a restraining chair that prevents you from looking away, your eyes are clamped open, and you are forced to stare endlessly into the abyss of the cultural apocalypse that the Twitterverse is apparently supposed to represent.

Feel free to call me a Biltonite, because I obviously agree far more with Bilton and Carr than with Packer.

Of course, I recommend that you read all four of the articles and posts I linked to and selectively quoted above, especially Carr's article, which is far more balanced than either my quotes or Packer's posts suggest.

Social Media Will Endure

We continue to witness the decline of print media and the corresponding evolution of social media. I completely understand why Packer (and others with a vested interest in print media) wants to believe that social media is a revolution that must be put down.

Hence the outrageous exaggeration of Packer's comparison of Twitter to drug abuse (crack cocaine), and his truly offensive comparison of Twitter to one of the worst medical tragedies in modern history (Thalidomide).

Our interest in exchanging what has traditionally been a broadcast-only medium (print media) for a conversation medium keeps growing. Beyond that, I believe the primary reason social media will endure is that it is enabling our communication to return to the more direct and immediate forms of information sharing that existed even before the evolution of written language.

Social media is an evolution and not a revolution being forced upon society by unrelenting technological advancements and techno-worship. In many ways, social media is not a new concept at all—technology has simply finally caught up with us.

Humans have always been “social” by our very nature. We have always thrived on connection, conversation, and community.

Social media is rapidly evolving. Therefore, specific services like Twitter may be replaced (or Twitter may continue to evolve).

However, the essence of social media will endure. The same can't be said of Packerites (neo-Luddites like George Packer).

What Say You?

Please share your thoughts on this debate by posting a comment below.

Or you can share your thoughts with me on Twitter—which reminds me, it's time for me to be strapped back into the chair . . .