There is No Such Thing as a Root Cause

Root cause analysis. Most people within the industry, myself included, often discuss the importance of determining the root cause of data governance and data quality issues. However, the complex cause and effect relationships underlying an issue mean that when an issue is encountered, often you are only seeing one of the numerous effects of its root cause (or causes).

In my post The Root! The Root! The Root Cause is on Fire!, I poked fun at those resistant to root cause analysis with the lyrics:

The Root! The Root! The Root Cause is on Fire!

We don’t want to determine why, just let the Root Cause burn.

Burn, Root Cause, Burn!

However, I think that the time is long overdue for even me to admit the truth — There is No Such Thing as a Root Cause.

Before you charge at me with torches and pitchforks for having an Abby Normal brain, please allow me to explain.

Defect Prevention, Mouse Traps, and Spam Filters

Some advocates of defect prevention claim that zero defects is not only a useful motivation, but also an attainable goal. In my post The Asymptote of Data Quality, I quoted Daniel Pink’s book Drive: The Surprising Truth About What Motivates Us:

“Mastery is an asymptote. You can approach it. You can home in on it. You can get really, really, really close to it. But you can never touch it. Mastery is impossible to realize fully.

The mastery asymptote is a source of frustration. Why reach for something you can never fully attain?

But it’s also a source of allure. Why not reach for it? The joy is in the pursuit more than the realization.

In the end, mastery attracts precisely because mastery eludes.”

The mastery of defect prevention is sometimes distorted into a belief in data perfection, into a belief that we can not just build a better mousetrap, but we can build a mousetrap that could catch all the mice, or that by placing a mousetrap in our garage, which prevents mice from entering via the garage, we somehow also prevent mice from finding another way into our house.

Obviously, we can’t catch all the mice. However, that doesn’t mean we should let the mice be like Pinky and the Brain:

Pinky: “Gee, Brain, what do you want to do tonight?”

The Brain: “The same thing we do every night, Pinky — Try to take over the world!”

My point is that defect prevention is not the same thing as defect elimination. Defects evolve. An excellent example of this is spam. Even conservative estimates indicate almost 80% of all e-mail sent worldwide is spam. A similar percentage of blog comments are spam, and spam-generating bots are quite prevalent on Twitter and other micro-blogging and social networking services. The inconvenient truth is that as we build better and better spam filters, spammers create better and better spam.

Just as mousetraps don’t eliminate mice and spam filters don’t eliminate spam, defect prevention doesn’t eliminate defects.

However, mousetraps, spam filters, and defect prevention are essential proactive best practices.

There are No Lines of Causation — Only Loops of Correlation

There are no root causes, only strong correlations. And correlations are strengthened by continuous monitoring. Believing there are root causes means believing continuous monitoring, and by extension, continuous improvement, has an end point. I call this the defect elimination fallacy, which I parodied in song in my post Imagining the Future of Data Quality.

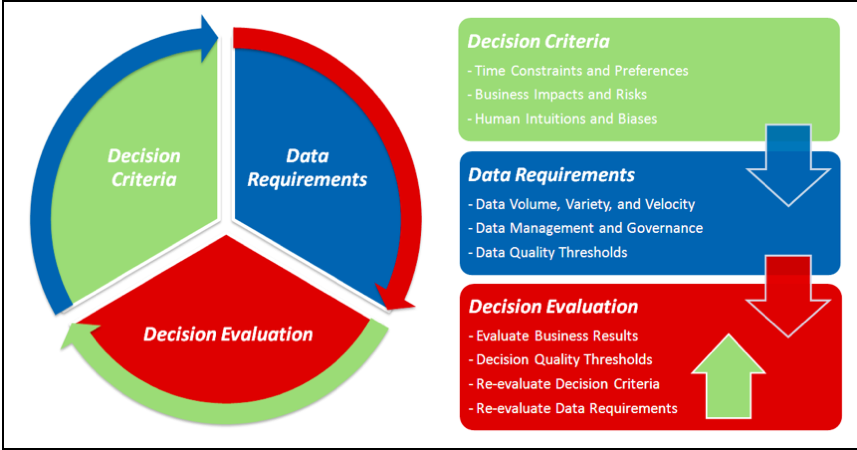

Knowing there are only strong correlations means knowing continuous improvement is an infinite feedback loop. A practical example of this reality comes from data-driven decision making, where:

1. Better Business Performance is often correlated with

2. Better Decisions, which, in turn, are often correlated with

3. Better Data, which is precisely why Better Decisions with Better Data is foundational to Business Success; however . . .

This does not mean that we can draw straight lines of causation between (3) and (1), (3) and (2), or (2) and (1).

Despite our preference for simplicity over complexity, if bad data were the root cause of bad decisions and/or bad business performance, no organization would ever be profitable, and if good data were the root cause of good decisions and/or good business performance, every organization could always be profitable. Even if good data were a root cause, not just a correlation, and even when data perfection is temporarily achieved, the effects would still be ephemeral because not only do defects evolve, but so does the business world. This evolution requires an endless revolution of continuous monitoring and improvement.

Many organizations implement data quality thresholds to close the feedback loop evaluating the effectiveness of their data management and data governance, but few implement decision quality thresholds to close the feedback loop evaluating the effectiveness of their data-driven decision making.
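As a rough illustration of what closing both feedback loops might look like, here is a minimal sketch that checks a data quality metric and a decision quality metric against thresholds in the same pass. The metric names (`data_completeness`, `decision_hit_rate`), the threshold values, and the scores are all hypothetical, invented for this example; they are not taken from any particular tool or organization.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class Threshold:
    name: str       # hypothetical metric name
    minimum: float  # lowest acceptable score on a 0.0 to 1.0 scale


def breaches(scores: dict[str, float], thresholds: list[Threshold]) -> list[str]:
    """Return the names of metrics that are missing or below their threshold."""
    failed = []
    for t in thresholds:
        score = scores.get(t.name)
        if score is None or score < t.minimum:
            failed.append(t.name)
    return failed


# Assumed thresholds: one for data quality, one for decision quality.
thresholds = [
    Threshold("data_completeness", 0.95),
    Threshold("decision_hit_rate", 0.60),
]

# Made-up scores for one monitoring period.
this_period = {"data_completeness": 0.97, "decision_hit_rate": 0.52}

flagged = breaches(this_period, thresholds)
print(flagged)  # ['decision_hit_rate']
```

In this made-up period the data passes its threshold while the decisions do not, which is exactly the kind of gap that goes unnoticed when only data quality is monitored.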

The quality of a decision is determined by the business results it produces, not the person who made the decision, the quality of the data used to support the decision, or even the decision-making technique. Of course, the reality is that business results are often not immediate and may sometimes be contingent upon the complex interplay of multiple decisions.

Even though evaluating decision quality only establishes a correlation, and not a causation, between the decision execution and its business results, it is still essential to continuously monitor data-driven decision making.
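To make the idea of monitoring a correlation concrete, here is a small sketch that computes the Pearson correlation coefficient between a data quality score and a business result over several periods. The function is a plain textbook implementation, and the two series are made-up numbers used only for illustration.

```python
import math


def pearson(xs, ys):
    """Pearson correlation coefficient between two equal-length sequences."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two sequences of equal length, at least 2 items")
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined for a constant sequence")
    return cov / math.sqrt(var_x * var_y)


# Hypothetical per-period observations (invented for this example).
data_quality = [0.80, 0.85, 0.90, 0.92, 0.95, 0.97]
business_result = [1.0, 1.4, 1.3, 1.9, 1.8, 2.2]

r = pearson(data_quality, business_result)
print(f"correlation: {r:.2f}")
```

A coefficient close to 1 says only that the two series tend to move together. It does not say that one causes the other, and it has to be recomputed as new periods arrive, which is why the monitoring never ends.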

Although the business world will never be totally predictable, we cannot turn a blind eye to the need for data-driven decision making best practices, or the reality that no best practice can eliminate the potential for poor data quality and decision quality, nor the potential for poor business results despite better data quality and decision quality. Central to continuous improvement is the importance of closing the feedback loops that make data-driven decisions more transparent through better monitoring, allowing the organization to learn from its decision-making mistakes and make adjustments when necessary.

We need to connect the dots of better business performance, better decisions, and better data by drawing loops of correlation.

Decision-Data Feedback Loop

Continuous improvement enables better decisions with better data, which drives better business performance, as long as you never stop looping the Decision-Data Feedback Loop and you accept that there is no such thing as a root cause.

I discuss this, and other aspects of data-driven decision making, in my DataFlux white paper, which is available for download (registration required) using the following link: Decision-Driven Data Management

Related Posts

The Root! The Root! The Root Cause is on Fire!

Bayesian Data-Driven Decision Making

The Role of Data Quality Monitoring in Data Governance

The Dichotomy Paradox, Data Quality and Zero Defects

Imagining the Future of Data Quality

What going to the Dentist taught me about Data Quality