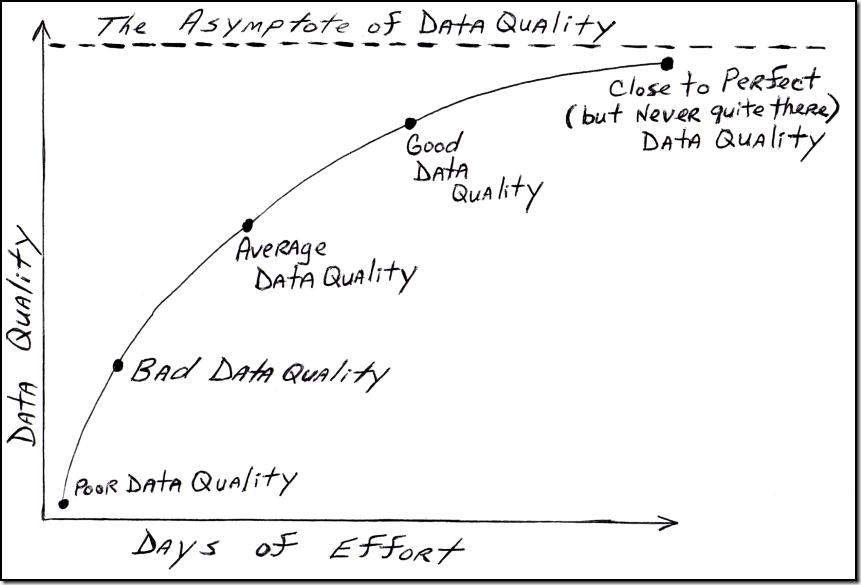

The Asymptote of Data Quality

In analytic geometry (according to Wikipedia), an asymptote of a curve is a line such that the distance between the curve and the line approaches zero as they tend to infinity. The inspiration for my hand-drawn illustration was a similar one (not related to data quality) in the excellent book Linchpin: Are You Indispensable? by Seth Godin, which describes an asymptote as:

“A line that gets closer and closer and closer to perfection, but never quite touches.”

“As you get closer to perfection,” Godin explains, “it gets more and more difficult to improve, and the market values the improvements a little bit less. Increasing your free-throw percentage from 98 to 99 percent may rank you better in the record books, but it won’t win any more games, and the last 1 percent takes almost as long to achieve as the first 98 percent did.”

The pursuit of data perfection is a common topic of debate in data quality circles, where it is usually known by the motto:

“The data will always be entered right, the first time, every time.”

However, Henrik Liliendahl Sørensen has cautioned that even when this ideal can be achieved, we must still acknowledge the inconvenient truth that things change. Evan Levy has reminded us that data quality isn’t the same as data perfection, and David Loshin has used the Pareto principle to describe the point of diminishing returns in data quality improvements.

Chasing data perfection can be a powerful motivation, but it can also undermine the best of intentions. It is important to accept that the Asymptote of Data Quality can never be reached, but we must also realize that data perfection was never the goal.

The goal is data-driven solutions for business problems—and these dynamic problems rarely have (or require) a perfect solution.

Data quality practitioners must strive for continuous data quality improvement, but always within the business context of data, and without losing themselves in the pursuit of a data-myopic ideal such as data perfection.

Related Posts

Is your data complete and accurate, but useless to your business?

MacGyver: Data Governance and Duct Tape

You Can’t Always Get the Data You Want

What going to the dentist taught me about data quality

Data Quality and The Middle Way

Hyperactive Data Quality (Second Edition)

The Data Quality Goldilocks Zone