Measuring Data Quality for Ongoing Improvement

OCDQ Radio is an audio podcast about data quality and its related disciplines, produced and hosted by Jim Harris.

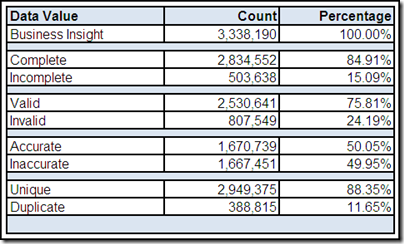

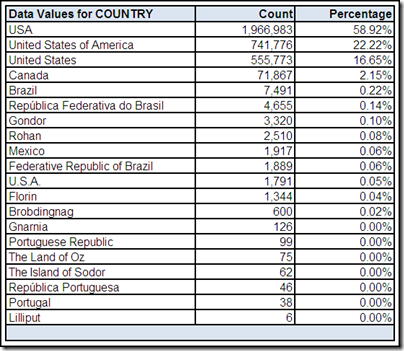

Listen as Laura Sebastian-Coleman, author of the book Measuring Data Quality for Ongoing Improvement: A Data Quality Assessment Framework, and I discuss how to improve the overall quality of data by bringing together a better understanding of what is represented in data, and how it is represented, with the expectations for its use. Our discussion also covers avoiding two common mistakes made when starting a data quality project, and defining five dimensions of data quality.

Laura Sebastian-Coleman has worked on data quality in large health care data warehouses since 2003. She has implemented data quality metrics and reporting, launched and facilitated a data quality community, contributed to data consumer training programs, and led efforts to establish data standards and to manage metadata. In 2009, she led a group of analysts in developing the original Data Quality Assessment Framework (DQAF), which is the basis for her book.

Laura Sebastian-Coleman has delivered papers at MIT’s Information Quality Conferences and at conferences sponsored by the International Association for Information and Data Quality (IAIDQ) and the Data Governance Organization (DGO). She holds the IQCP (Information Quality Certified Professional) designation from IAIDQ, a Certificate in Information Quality from MIT, a B.A. in English and History from Franklin & Marshall College, and a Ph.D. in English Literature from the University of Rochester.

Popular OCDQ Radio Episodes

Clicking on the link will take you to the episode’s blog post:

- Demystifying Data Science — Guest Melinda Thielbar, a Ph.D. Statistician, discusses what a data scientist does and provides a straightforward explanation of key concepts such as signal-to-noise ratio, uncertainty, and correlation.

- Data Quality and Big Data — Guest Tom Redman (aka the “Data Doc”) discusses Data Quality and Big Data, including whether data quality matters less in larger data sets, and whether statistical outliers represent business insights or data quality issues.

- Doing Data Governance — Guest John Ladley discusses his book How to Design, Deploy and Sustain Data Governance and how to understand the difference and relationship between data governance and enterprise information management.

- Demystifying Master Data Management — Guest John Owens explains the three types of data (Transaction, Domain, Master), the four master data entities (Party, Product, Location, Asset), and the Party-Role Relationship, which is where we find many of the terms commonly used to describe the Party master data entity (e.g., Customer, Supplier, Employee).

- The Blue Box of Information Quality — Guest Daragh O Brien on why Information Quality is bigger on the inside, using stories as an analytical tool and change management technique, and why we must never forget that “people are cool.”

- Data Governance Star Wars — Special Guests Rob Karel and Gwen Thomas joined this extended, Star Wars-themed discussion about how to balance bureaucracy and business agility during the execution of data governance programs.

- Good-Enough Data for Fast-Enough Decisions — Guest Julie Hunt discusses Data Quality and Business Intelligence, including the speed versus quality debate of near-real-time decision making, and the future of predictive analytics.

- The Johari Window of Data Quality — Guest Martin Doyle discusses helping people better understand their data and assess its business impacts, not just the negative impacts of bad data quality, but also the positive impacts of good data quality.

- The Art of Data Matching — Guest Henrik Liliendahl Sørensen discusses data matching concepts and practices, including different match techniques, candidate selection, presentation of match results, and business applications of data matching.

- Data Profiling Early and Often — Guest James Standen discusses data profiling concepts and practices, and how bad data is often misunderstood and can be coaxed away from the dark side if you know how to approach it.

- Studying Data Quality — Guest Gordon Hamilton discusses the key concepts from recommended data quality books, including those which he has implemented in his career as a data quality practitioner.
